- Google DeepMind released Gemini Robotics-ER 1.6 on April 14, 2026, targeting embodied reasoning for real-world robots.

- Instrument-reading success climbs from 23% to 93% when the model is paired with agentic vision, a step change for factory inspection.

- New multi-view understanding fuses multiple camera streams, unlocking manipulation tasks that previous single-view models failed at.

- Distributed via the Gemini API and Google AI Studio with Colab samples, making it immediately reachable for developer teams.

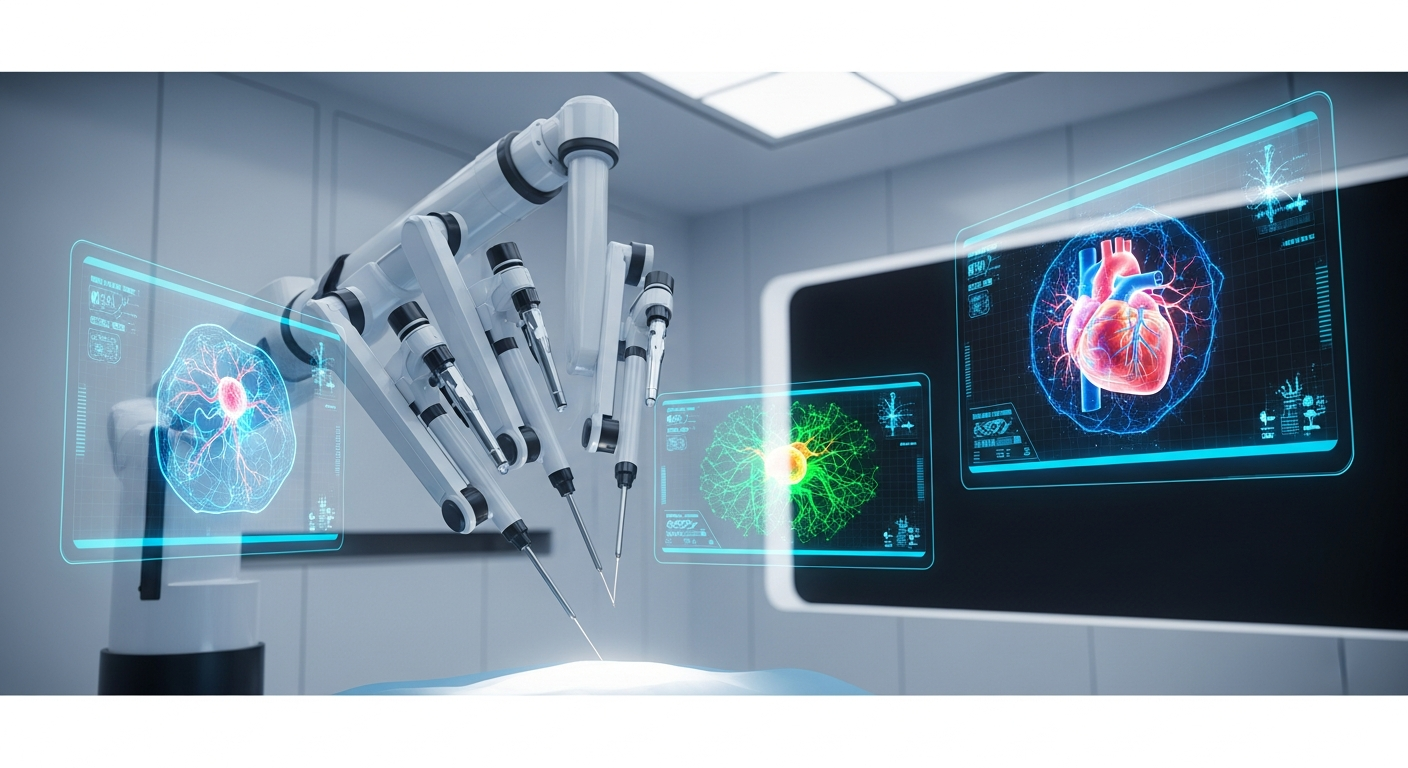

Google DeepMind quietly shipped one of the most consequential robotics updates of the year on April 14. Gemini Robotics-ER 1.6 (the ER stands for embodied reasoning) is a vision-language model (VLM) tuned specifically for the messy, multi-angle, instrument-laden reality of physical work. The numbers in the release brief are not incremental: a jump from 23% to 93% on instrument reading when combined with agentic vision is the kind of threshold crossing that turns a research demo into a procurement conversation.

What Changed in 1.6

Multi-View Understanding

Prior robotics VLMs largely reasoned over a single camera feed at a time, forcing developers to stitch viewpoints together in application code. Version 1.6 natively ingests multiple synchronized streams, enabling tasks like finding a loose valve that is visible from the overhead camera but occluded in the wrist camera's view. This aligns the model with how industrial cells are actually instrumented.
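For teams whose cameras are not hardware-synchronized, one way to prepare matching views before sending them in a single request is to pair frames by capture time. The sketch below is only an illustration under stated assumptions: the `Frame` type, the camera labels, and the 50 ms tolerance are hypothetical and do not come from DeepMind's release, and no Gemini API call is shown.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    camera: str        # hypothetical camera label, e.g. "overhead" or "wrist"
    timestamp_ms: int  # capture time in milliseconds
    path: str          # where the image file is stored


def nearest_frame(frames, target_ms):
    """Return the frame closest in time to target_ms.

    Assumes `frames` is non-empty and sorted by timestamp_ms.
    """
    times = [f.timestamp_ms for f in frames]
    i = bisect_left(times, target_ms)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = frames[max(i - 1, 0):i + 1]
    return min(candidates, key=lambda f: abs(f.timestamp_ms - target_ms))


def pair_views(overhead, wrist, tolerance_ms=50):
    """Pair each overhead frame with the closest wrist frame within tolerance."""
    pairs = []
    if not wrist:
        return pairs
    for o in overhead:
        w = nearest_frame(wrist, o.timestamp_ms)
        if abs(w.timestamp_ms - o.timestamp_ms) <= tolerance_ms:
            pairs.append((o, w))
    return pairs


overhead = [Frame("overhead", 0, "o0.png"), Frame("overhead", 100, "o1.png"),
            Frame("overhead", 200, "o2.png")]
wrist = [Frame("wrist", 10, "w0.png"), Frame("wrist", 95, "w1.png"),
         Frame("wrist", 400, "w2.png")]

pairs = pair_views(overhead, wrist)
for o, w in pairs:
    print(o.path, "<->", w.path)
```

Overhead frames with no wrist frame inside the tolerance are dropped rather than paired with a stale view, which is a conservative choice for inspection tasks.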

Instrument Reading and Success Detection

Reading gauges, sight glasses, and digital displays has been a notorious blind spot for generalist VLMs. The reported 93% success rate with agentic vision, a 70-percentage-point absolute lift over the prior release, directly targets the inspection, utilities, and process-manufacturing segments. Paired with improved success detection, robots can close more of their own verification loops instead of waiting on a human confirmation step.
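The size of that lift is easier to judge when the same two figures are viewed as failure rates. The short calculation below uses only the 23% and 93% quoted above; the variable names are illustrative.

```python
before_pct, after_pct = 23, 93  # instrument-reading success rates quoted in the article

absolute_lift = after_pct - before_pct        # percentage points
relative_gain = after_pct / before_pct        # multiple of the earlier success rate
failure_before = 100 - before_pct             # failed readings per 100 attempts
failure_after = 100 - after_pct
failure_reduction = failure_before / failure_after

print(f"Absolute lift: {absolute_lift} points")          # 70 points
print(f"Relative gain: {relative_gain:.2f}x")            # 4.04x
print(f"Failures per 100 readings: {failure_before} -> {failure_after}")
print(f"Failure rate shrinks by {failure_reduction:.0f}x")  # 11x
```

Seen this way, the change is from roughly three failed readings in four to about one in fourteen.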

AI Biz Insider Analysis: The 23%-to-93% jump is the headline, but the quieter story is distribution. By shipping via the Gemini API rather than a bespoke robotics SDK, DeepMind is betting that the next wave of industrial AI integrators will be app developers, not robotics specialists. That reframes the competitive landscape against NVIDIA Isaac and Figure’s proprietary stacks.

Why It Matters for Enterprise Buyers

From Demo to Deployment

Facility inspection, gauge monitoring, and multi-camera quality control are the three use cases DeepMind foregrounds, and they happen to be exactly the workflows that manufacturing operators have been prototyping in-house for 18 months. In our view, a 93% success rate is inside the band where pilots typically convert to rollouts, provided the remaining 7% of readings that fail are reliably caught by human-in-the-loop review.
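A minimal sketch of what such a human-in-the-loop gate could look like in application code follows. Everything here is an assumption made for illustration: the `GaugeReading` type, the `confidence` field, and the thresholds are hypothetical, and the article does not say that the model returns a confidence score.

```python
from dataclasses import dataclass


@dataclass
class GaugeReading:
    gauge_id: str
    value: float
    confidence: float  # hypothetical score in [0, 1]; not confirmed by the release


def route(readings, low, high, min_confidence=0.9):
    """Split readings into an auto-accepted list and a human-review list.

    A reading is auto-accepted only if it lies inside the expected operating
    range [low, high] AND its confidence meets the threshold. Anything else
    (a possible misread or a genuine alarm) goes to a person.
    """
    accepted, review = [], []
    for r in readings:
        in_range = low <= r.value <= high
        if in_range and r.confidence >= min_confidence:
            accepted.append(r)
        else:
            review.append(r)
    return accepted, review


readings = [
    GaugeReading("p1", 4.2, 0.97),  # plausible and confident
    GaugeReading("p2", 4.1, 0.60),  # plausible but low confidence
    GaugeReading("p3", 9.9, 0.99),  # confident but outside expected range
]
accepted, review = route(readings, low=0.0, high=6.0)
print("auto-accepted:", [r.gauge_id for r in accepted])
print("needs review:", [r.gauge_id for r in review])
```

Sending out-of-range values to review regardless of confidence keeps a person in the loop for exactly the cases where a wrong reading would be most costly.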

Adversarial Safety Hardening

DeepMind also highlights improved compliance on adversarial spatial-reasoning prompts. For regulated industries this matters almost as much as raw accuracy: audit teams need evidence that the model will refuse unsafe physical actions rather than confabulate a plausible one. The adversarial evaluation suite is the piece procurement lawyers will want to read in full before signing.


Sources

- Google DeepMind — Gemini Robotics-ER 1.6 (April 14, 2026)

- Google — Gemma 4 Open Models Announcement (April 2, 2026)

AI Biz Insider · AI Trends EN · aibizinsider.com
